The Macro: The Browser Automation Gold Rush Is Real, But Most Players Are Building the Wrong Thing

The browser automation space has gotten genuinely crowded in the last eighteen months. You’ve got Manus AI, OpenClaw (which I wrote about tangentially when covering Donely’s hosting tax play), and a dozen quieter projects all chasing the same core idea: what if the agent just… used the internet the way a person does? Click, scroll, read, act.

The underlying bet is that vision-capable models have finally crossed a threshold where you can point a headless browser at a webpage, screenshot it, feed that to a multimodal model, and get back something useful. That bet is probably right. The question is whether any given team can build the orchestration layer on top of it that actually holds together at scale.
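For anyone who hasn’t seen that loop written down, here’s a minimal sketch of the screenshot-to-model-to-action cycle. Everything in it is an assumption for illustration: `Browser`, `VisionModel`, `Action`, and `run_agent` are hypothetical names, not Magine’s API or anyone else’s, and the fake classes at the bottom stand in for a real headless browser and a real multimodal model so the sketch runs on its own.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Action:
    kind: str          # hypothetical action vocabulary: "click", "scroll", "done"
    target: str = ""   # e.g. a selector or a description of the element


class Browser(Protocol):
    def screenshot(self) -> bytes: ...
    def apply(self, action: Action) -> None: ...


class VisionModel(Protocol):
    def next_action(self, goal: str, screenshot: bytes) -> Action: ...


def run_agent(goal: str, browser: Browser, model: VisionModel,
              max_steps: int = 10) -> list[Action]:
    """Screenshot, ask the model for one action, apply it, repeat.

    Stops when the model says "done" or the step budget runs out.
    """
    history: list[Action] = []
    for _ in range(max_steps):
        action = model.next_action(goal, browser.screenshot())
        history.append(action)
        if action.kind == "done":
            break
        browser.apply(action)
    return history


# Stand-ins so the sketch runs without a real browser or model.
class FakeBrowser:
    def __init__(self) -> None:
        self.applied: list[Action] = []

    def screenshot(self) -> bytes:
        return f"page after {len(self.applied)} actions".encode()

    def apply(self, action: Action) -> None:
        self.applied.append(action)


class ScriptedModel:
    """Replays a fixed list of actions, then answers "done"."""

    def __init__(self, script: list[Action]) -> None:
        self._script = iter(script)

    def next_action(self, goal: str, screenshot: bytes) -> Action:
        return next(self._script, Action("done"))


if __name__ == "__main__":
    model = ScriptedModel([Action("click", "#login"), Action("scroll")])
    for step in run_agent("log in", FakeBrowser(), model):
        print(step)
```

Note how little of this is the model. The loop itself is a dozen lines; the step budget is the only safety net in it, which is roughly the point about orchestration being the hard part.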

Here’s what I think most people get wrong: they assume the hard part is the vision model. It’s not. The hard part is knowing what to do when the vision model fails, which it will. That’s orchestration. That’s the moat. And almost nobody in this space is actually solving for it yet. Most are just wrapping Claude or GPT-4V and calling it an agent.

Agent orchestration as a category is attracting serious money and serious engineering talent right now. Agent 37 is going after the cost angle. CoChat is going after the team-workflow angle. Everyone has a slightly different framing for what is essentially the same core primitive: autonomous agents doing computer work without a human in the loop.

The productivity software market is enormous: multiple sources peg it well above $60 billion in 2024 and growing fast. Web automation is a slice of that, but it’s a slice that touches almost every knowledge-worker workflow that involves pulling data, monitoring pages, or filling forms. The timing feels right on the surface, but I think we’re about two years too early for most of these companies to matter. The model capability is there. The business model clarity isn’t.

The Micro: ASCII Clouds and Agent Swarms, Somehow Both in the Same Product

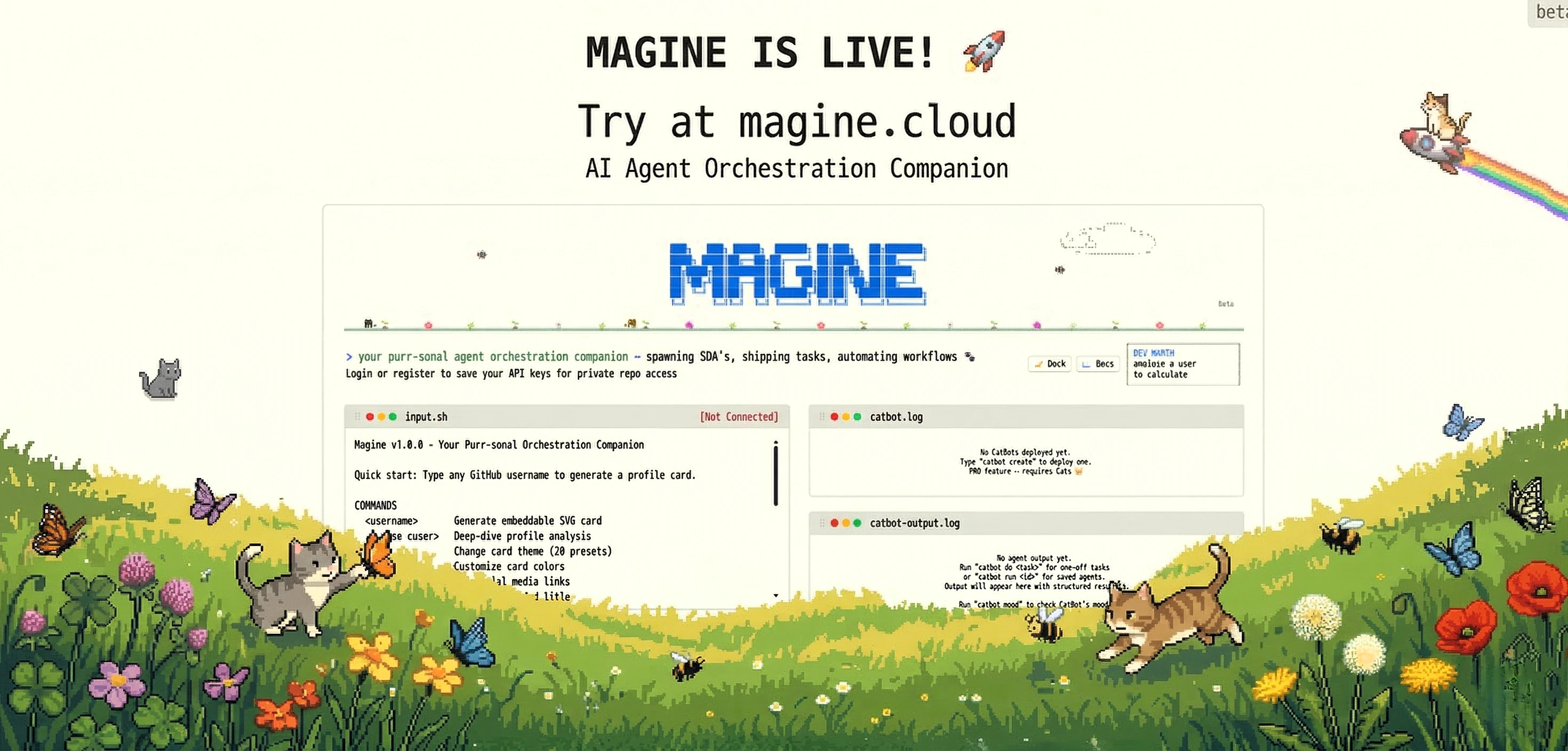

Okay so. The Magine website is something. You land on a terminal-style interface full of ASCII art clouds, bee emojis, and flower borders. The product calls itself your “purr-sonal agent orchestration companion.” The mascot situation involves cats. This is a real product that is trying to sell you on autonomous AI agents, and it has decided the vibe is cozy cottagecore hacker.

I actually don’t hate this as a choice. It’s memorable.

What Magine actually does, underneath the aesthetic: it spins up what it calls vision-enabled AI agents in the cloud, and those agents browse the web autonomously. The pitch is zero human interference. The agents can see pages (not just parse HTML), which matters for modern web apps that render content dynamically. They’re positioned at the developer-tools end of the market, not the no-code end.

The terminal interface on the website is functional, not just decorative. You can type commands. The current demo defaults to GitHub profile analysis, where you feed it a username and it generates an embeddable SVG card. That’s a narrow, concrete use case, which is either a sign they’ve scoped the MVP sensibly or a sign the broader agent functionality is further out than the tagline implies.

The token economy is interesting. You buy “Cats” ($5 gets you one Cat, which equals 5 million tokens, according to the site). The “CatBot agents” feature is gated to PRO. There’s an API key system and webhook support, which signals this is meant to be embedded in other people’s workflows, not used directly.
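The arithmetic on that pricing is worth spelling out. The two constants below come straight from the site’s stated numbers; the tokens-per-run figure is purely hypothetical (I don’t have a real one), and the assumption that Cats are only sold whole is mine.

```python
import math

CAT_PRICE_USD = 5.00          # from the site: $5 buys one Cat
TOKENS_PER_CAT = 5_000_000    # from the site: one Cat equals 5 million tokens


def usd_per_million_tokens() -> float:
    """Effective price per one million tokens at the listed rate."""
    return CAT_PRICE_USD / (TOKENS_PER_CAT / 1_000_000)


def cats_needed(tokens: int) -> int:
    """Whole Cats required to cover a token budget (assumes no fractional Cats)."""
    return math.ceil(tokens / TOKENS_PER_CAT)


def runs_per_cat(tokens_per_run: int) -> int:
    """How many complete agent runs one Cat covers at a given per-run cost."""
    return TOKENS_PER_CAT // tokens_per_run


if __name__ == "__main__":
    HYPOTHETICAL_TOKENS_PER_RUN = 40_000  # made-up number for illustration
    print(usd_per_million_tokens())                   # 1.0
    print(cats_needed(12_000_000))                    # 3
    print(runs_per_cat(HYPOTHETICAL_TOKENS_PER_RUN))  # 125
```

So the listed rate works out to $1 per million tokens. Whether that’s cheap depends entirely on how many tokens a vision-heavy run actually burns, which is the number you’d want before committing.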

It got solid traction on launch day, which tracks for a dev-tools product with a distinct visual identity.

The part I’d want someone to explain to me: the tagline says “the internet will be for bots, humans are the watchers.” That’s either a philosophical statement about where AI is headed or a marketing line that sounds cooler than it is. Probably both.

The Verdict: Magine Is Too Demo-Heavy to Trust, But It’s Asking the Right Questions

Magine is attempting something real, but they haven’t proven it yet. The gap between the vision (autonomous agents, zero human interference, the internet is for bots now) and the current demo (GitHub profile cards) isn’t just wide, it’s a chasm. And here’s what bothers me: they’re showing the easiest possible use case. GitHub is a structured platform with predictable layouts. A real agent needs to handle chaos.

The developer-tools angle is the right strategic call. Developers will tolerate rough edges if the underlying capability is genuine. The token pricing model is legible. But here’s the thing that actually matters: does the vision-browsing work on sites they didn’t train on? Because if it does, they have something. If it doesn’t, they have a well-marketed wrapper around existing APIs, which means they’re just another player in a crowded, commoditizing space.

The one thing that determines whether Magine exists in two years is whether their orchestration layer can handle the failure modes the vision model throws at it. Can it recover from a hallucination? Can it adapt when a page layout changes? Can it know when to ask for human help versus when to keep trying? That’s not a demo question. That’s an architecture question.
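To show what I mean by an architecture question, here’s a rough sketch of the kind of recovery policy those three questions imply. This is my own illustration, not anything Magine has published: `Outcome`, `Decision`, `decide`, and `run_step` are hypothetical names, and the three-attempt cap is an arbitrary choice.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    OK = "ok"
    LOW_CONFIDENCE = "low_confidence"   # model unsure, possibly hallucinating
    LAYOUT_CHANGED = "layout_changed"   # expected element was not on the page


class Decision(Enum):
    CONTINUE = "continue"     # step worked, move on
    RETRY = "retry"           # try the same step again
    REOBSERVE = "reobserve"   # the page moved: look again before acting
    ESCALATE = "escalate"     # stop and hand off to a human


def decide(outcome: Outcome, attempts: int, max_attempts: int = 3) -> Decision:
    """Map one step's outcome to what the orchestrator should do next."""
    if outcome is Outcome.OK:
        return Decision.CONTINUE
    if attempts >= max_attempts:
        return Decision.ESCALATE
    if outcome is Outcome.LAYOUT_CHANGED:
        return Decision.REOBSERVE
    return Decision.RETRY


def run_step(step: Callable[[int], Outcome],
             max_attempts: int = 3) -> tuple[Decision, int]:
    """Run one step until it succeeds or is escalated.

    Returns the final decision and the number of attempts used. In this
    sketch RETRY and REOBSERVE both just loop again; a real orchestrator
    would refresh its screenshot and re-plan on REOBSERVE.
    """
    attempts = 0
    while True:
        attempts += 1
        decision = decide(step(attempts), attempts, max_attempts)
        if decision in (Decision.CONTINUE, Decision.ESCALATE):
            return decision, attempts


if __name__ == "__main__":
    # A step that is shaky twice, then works on the third attempt.
    flaky = lambda attempt: Outcome.OK if attempt >= 3 else Outcome.LOW_CONFIDENCE
    print(run_step(flaky))
    # A step that never finds its element ends up escalated.
    print(run_step(lambda attempt: Outcome.LAYOUT_CHANGED))
```

The policy itself is trivial. The hard part it hides is producing a trustworthy `Outcome` in the first place, that is, knowing the model was wrong, and that detection problem is where I’d expect the real engineering to go.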

My prediction: Magine gets acquired within eighteen months by either a larger automation platform or a workflow company that wants the developer motion. They probably won’t become the standalone agent company they’re positioning for, because that market doesn’t exist yet.